Node.js Error Handling That Doesn't Embarrass You in Production

Technical PM + Software Engineer

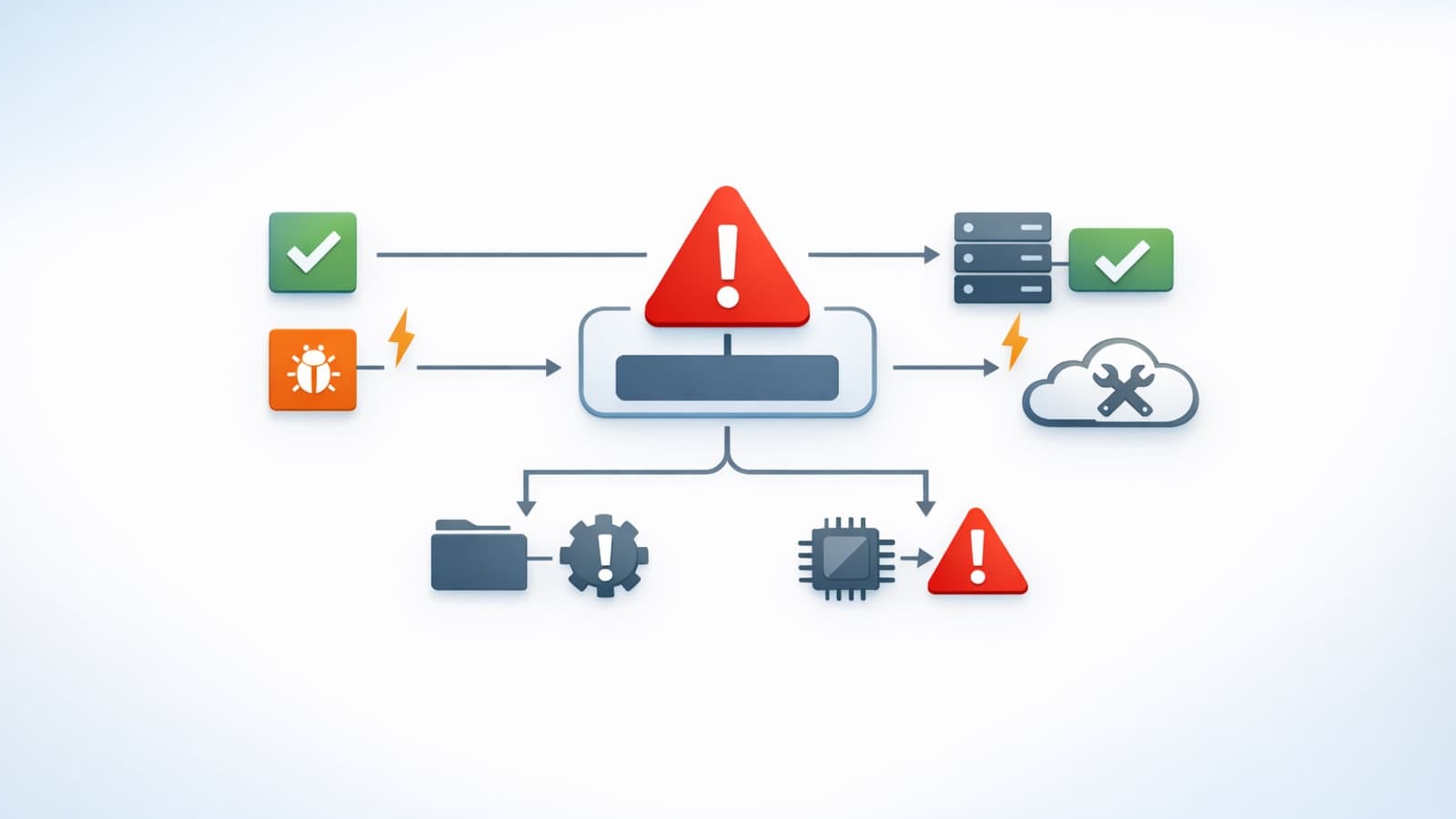

Many backend incidents are not caused by one dramatic failure. They are caused by inconsistent error behavior under normal pressure.

Typical symptoms:

- different routes return different error shapes,

- logs lack request context,

- status codes are overloaded (one code, such as 400 or 500, covers unrelated failures),

- retries duplicate side effects,

- incident triage takes too long because failure semantics are unclear.

This guide is a practical error-handling model for Node.js services that improves reliability and operational clarity.

Principle: failure must be intentional

A mature service answers these questions consistently:

- what kind of failure is this?

- what should the client receive?

- what should be logged, and what should trigger an alert?

- is retry safe?

- is this an operational error or a programmer error?

If those answers vary by route, reliability degrades fast.

Pattern 1: explicit error taxonomy

Use a shared taxonomy:

- validation

- auth

- forbidden

- not_found

- conflict

- rate_limit

- upstream

- internal

This drives response mapping, logging, and alerting policy.

Pattern 2: typed application error base

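    type ErrorKind =
      | "validation"
      | "auth"
      | "forbidden"
      | "not_found"
      | "conflict"
      | "rate_limit"
      | "upstream"
      | "internal";

    class AppError extends Error {
      constructor(
        public readonly kind: ErrorKind,      // taxonomy bucket from Pattern 1
        public readonly code: string,         // stable machine-readable code, e.g. "NOT_FOUND"
        public readonly status: number,       // HTTP status sent to the client
        message: string,
        public readonly details?: unknown,
        public readonly isOperational = true, // false marks a programmer error
        options?: { cause?: unknown }         // Error `cause` needs Node.js 16.9+ and the ES2022 lib
      ) {
        super(message, options);
        this.name = "AppError";
      }
    }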

Typed errors prevent ad-hoc route behavior.

Pattern 3: centralized error middleware

Map errors in one place.

The response envelope should be stable:

    {
      "error": {
        "code": "VALIDATION_ERROR",
        "message": "Request validation failed",
        "details": {},
        "requestId": "req_abc123"
      }
    }

Unknown errors map to 500 INTERNAL_ERROR without leaking internals.
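As a minimal sketch of that single mapping point, the function below turns any thrown value into a status plus envelope. It is written without a framework so it could be called from an Express-style error middleware or any other handler. The names `toErrorResponse` and `HttpErrorResponse`, the message "Internal server error", and the compact `AppError` stand-in are assumptions for illustration, not part of any library.

```typescript
// Compact stand-in for the AppError class from Pattern 2 (illustrative).
class AppError extends Error {
  constructor(
    public readonly code: string,
    public readonly status: number,
    message: string,
    public readonly details?: unknown
  ) {
    super(message);
    this.name = "AppError";
    // Keeps `instanceof` working even when compiled to an ES5 target.
    Object.setPrototypeOf(this, new.target.prototype);
  }
}

interface ErrorEnvelope {
  error: { code: string; message: string; details?: unknown; requestId: string };
}

interface HttpErrorResponse {
  status: number;
  body: ErrorEnvelope;
}

// Hypothetical helper: the one place where a thrown value becomes a response.
function toErrorResponse(err: unknown, requestId: string): HttpErrorResponse {
  if (err instanceof AppError) {
    return {
      status: err.status,
      body: {
        error: { code: err.code, message: err.message, details: err.details, requestId },
      },
    };
  }
  // Unknown error: fixed code and message, so stack traces, SQL fragments,
  // or hostnames from the original error never reach the client.
  return {
    status: 500,
    body: { error: { code: "INTERNAL_ERROR", message: "Internal server error", requestId } },
  };
}
```

In an Express-style application, the error middleware would call this helper and write `status` and `body` to the response, while the original error goes to the log instead of the client.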

Pattern 4: validate at boundaries

Many avoidable 500s begin as invalid input that was accepted and then passed too deep into the service.

Validate request payloads at ingress and fail fast with structured 4xx errors.
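One way to sketch boundary validation is a small hand-written parser that turns untrusted input into a typed value or throws a structured 400. The `CreateOrderRequest` shape, the `parseCreateOrder` function, and the `ValidationError` class are hypothetical; a schema library could play the same role.

```typescript
// Hypothetical request shape for illustration.
interface CreateOrderRequest {
  customerId: string;
  quantity: number;
}

interface FieldIssue {
  field: string;
  issue: string;
}

// Structured 4xx failure carrying per-field issues.
class ValidationError extends Error {
  readonly code = "VALIDATION_ERROR";
  readonly status = 400;
  constructor(public readonly details: FieldIssue[]) {
    super("Request validation failed");
    this.name = "ValidationError";
  }
}

// Parses untrusted input at ingress; deeper layers only ever see a typed value.
function parseCreateOrder(input: unknown): CreateOrderRequest {
  const body = (typeof input === "object" && input !== null ? input : {}) as Record<string, unknown>;
  const issues: FieldIssue[] = [];

  const customerId = body.customerId;
  if (typeof customerId !== "string" || customerId.length === 0) {
    issues.push({ field: "customerId", issue: "must be a non-empty string" });
  }

  const quantity = body.quantity;
  if (typeof quantity !== "number" || !Number.isInteger(quantity) || quantity < 1) {
    issues.push({ field: "quantity", issue: "must be a positive integer" });
  }

  // Report every problem at once instead of failing on the first one.
  if (issues.length > 0) throw new ValidationError(issues);
  return { customerId: customerId as string, quantity: quantity as number };
}
```

Collecting all issues before throwing lets the client fix its request in one round trip, and the `details` array slots directly into the response envelope.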

Pattern 5: preserve cause chains

Do not flatten errors into generic strings.

    try {
      // ...call the billing provider...
    } catch (err) {
      // Keep the original error reachable through `cause`.
      throw new AppError("upstream", "UPSTREAM_FAILURE", 502, "Billing provider failed", undefined, true, { cause: err });
    }

Cause chains let responders trace a failure from the API-level error back to the original exception, which shortens incident diagnosis.
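A preserved chain is only useful if it reaches the logs. The sketch below flattens an error and its `cause` ancestors into a loggable array. The function name `describeCauseChain` and the depth limit of 10 are assumptions for illustration.

```typescript
interface CauseEntry {
  name: string;
  message: string;
}

// Flattens an error and its `cause` ancestors into loggable entries,
// outermost error first. maxDepth guards against accidental cycles.
function describeCauseChain(err: unknown, maxDepth = 10): CauseEntry[] {
  const chain: CauseEntry[] = [];
  let current: unknown = err;
  for (let depth = 0; current !== undefined && current !== null && depth < maxDepth; depth++) {
    if (current instanceof Error) {
      chain.push({ name: current.name, message: current.message });
      current = (current as { cause?: unknown }).cause;
    } else {
      // Non-Error values can be thrown too; record the value and stop.
      chain.push({ name: "NonError", message: String(current) });
      break;
    }
  }
  return chain;
}
```

Logging the resulting array next to the request context shows both the stable API-level error and the low-level failure that triggered it.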

Pattern 6: structured logging with context

Error logs should include:

- requestId

- route/method

- user/actor where safe

- status/code/kind

- latency and dependency context

Avoid dumping secrets or raw PII.
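The fields above could be captured in one JSON line per error, roughly as sketched here. The `ErrorLogContext` type, the `buildErrorLog` and `redact` helpers, and the deny-list of sensitive key names are all illustrative assumptions; a real service would use its logger's own redaction features and a list suited to its data.

```typescript
interface ErrorLogContext {
  requestId: string;
  method: string;
  route: string;
  actorId?: string; // include only where it is safe to log
  status: number;
  code: string;
  kind: string;
  latencyMs: number;
  dependency?: string; // e.g. the upstream that failed
}

// Illustrative deny-list of key fragments, matched case-insensitively.
const SENSITIVE_KEYS = ["password", "token", "authorization", "secret"];

// Replaces sensitive values (top-level keys only) before anything is logged.
function redact(fields: Record<string, unknown>): Record<string, unknown> {
  const safe: Record<string, unknown> = {};
  for (const key of Object.keys(fields)) {
    const lower = key.toLowerCase();
    const sensitive = SENSITIVE_KEYS.some((fragment) => lower.indexOf(fragment) !== -1);
    safe[key] = sensitive ? "[REDACTED]" : fields[key];
  }
  return safe;
}

// Builds one JSON line per error so log tooling can filter on any field.
function buildErrorLog(ctx: ErrorLogContext, extra: Record<string, unknown> = {}): string {
  return JSON.stringify({
    level: "error",
    time: new Date().toISOString(),
    ...ctx,
    extra: redact(extra),
  });
}
```

Note that this redaction is shallow: nested objects would need a recursive pass, which is omitted here for brevity.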

Pattern 7: retry + idempotency discipline

Retries are useful only when:

- failure class is retryable,

- operation is idempotent,

- backoff/jitter exists.

For side-effecting operations, enforce idempotency keys.
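The three conditions can be combined in a small wrapper, sketched below under some assumptions: `withRetry` and `RetryOptions` are hypothetical names, the caller supplies the `isRetryable` classification, and the downstream service is assumed to deduplicate on the idempotency key it receives.

```typescript
import { randomUUID } from "node:crypto";

interface RetryOptions {
  maxAttempts: number;
  baseDelayMs: number;
  isRetryable: (err: unknown) => boolean;
}

const sleep = (ms: number): Promise<void> =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

// Retries `operation` with exponential backoff and full jitter.
// One idempotency key is generated per logical operation and reused on
// every attempt, so the receiver can detect and drop duplicates.
async function withRetry<T>(
  operation: (idempotencyKey: string) => Promise<T>,
  opts: RetryOptions
): Promise<T> {
  const idempotencyKey = randomUUID();
  for (let attempt = 1; ; attempt++) {
    try {
      return await operation(idempotencyKey);
    } catch (err) {
      // Give up on the last attempt or when the failure class is not retryable.
      if (attempt >= opts.maxAttempts || !opts.isRetryable(err)) throw err;
      const ceiling = opts.baseDelayMs * 2 ** (attempt - 1);
      await sleep(Math.random() * ceiling); // full jitter: uniform in [0, ceiling)
    }
  }
}
```

A caller would typically forward `idempotencyKey` as a request header or field to the side-effecting endpoint. The key only helps if that endpoint stores it and returns the original result for repeats.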

Pattern 8: process-level policies

Define behavior for:

- unhandledRejection

- uncaughtException

Crash-and-restart policy for fatal programmer errors should be explicit and consistent with your runtime supervisor.
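A minimal sketch of an explicit policy follows: log the failure, then exit with a non-zero code so the supervisor starts a clean process. The `installProcessHandlers` function and `FatalLogger` type are hypothetical; the `exit` parameter exists only so the behavior can be exercised in tests. Recent Node.js versions already terminate on unhandled rejections by default, so the handler here mainly makes logging and the exit code deliberate.

```typescript
type FatalLogger = (event: string, err: unknown) => void;

// Hypothetical installer for process-level failure policy.
// After an uncaught exception the process state is not trustworthy,
// so the policy is to log and exit rather than keep serving traffic.
function installProcessHandlers(
  log: FatalLogger,
  exit: (code: number) => void = (code) => process.exit(code)
): void {
  process.on("unhandledRejection", (reason) => {
    log("unhandledRejection", reason);
    exit(1);
  });
  process.on("uncaughtException", (err) => {
    log("uncaughtException", err);
    exit(1);
  });
}
```

In production the logger should write synchronously or flush before exit, otherwise the final log line, which is the most useful one, can be lost.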

Pattern 9: operational mapping table

Maintain a shared code -> status mapping (e.g., NOT_FOUND -> 404, CONFLICT -> 409, UPSTREAM_TIMEOUT -> 504).

This keeps client behavior deterministic across services.
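Such a table could live in a small shared module, sketched here. `NOT_FOUND`, `CONFLICT`, and `UPSTREAM_TIMEOUT` come from the text above; the remaining codes and the `statusFor` helper are illustrative assumptions.

```typescript
// Single source of truth for code -> HTTP status.
const STATUS_BY_CODE = {
  VALIDATION_ERROR: 400,
  UNAUTHENTICATED: 401,
  FORBIDDEN: 403,
  NOT_FOUND: 404,
  CONFLICT: 409,
  RATE_LIMITED: 429,
  INTERNAL_ERROR: 500,
  UPSTREAM_FAILURE: 502,
  UPSTREAM_TIMEOUT: 504,
} as const;

type ErrorCode = keyof typeof STATUS_BY_CODE;

// Looks up the status for a code. Unknown codes fall back to 500 rather
// than guessing. hasOwnProperty avoids matching inherited keys such as
// "toString".
function statusFor(code: string): number {
  return Object.prototype.hasOwnProperty.call(STATUS_BY_CODE, code)
    ? STATUS_BY_CODE[code as ErrorCode]
    : 500;
}
```

Deriving `ErrorCode` from the table means that adding a code in one place updates both the runtime mapping and the compile-time type.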

Pattern 10: failure-path testing

Test failure behavior directly:

- envelope shape tests,

- validation failure tests,

- upstream timeout mapping tests,

- auth/permission failure tests.

If errors are part of product behavior, they require first-class test coverage.
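Envelope shape tests become cheap when every failure-path test shares one assertion helper. The sketch below uses Node's built-in `assert` module; the helper name `assertErrorEnvelope` and the allowed key list (taken from the envelope in Pattern 3) are assumptions for illustration.

```typescript
import { strict as assert } from "node:assert";

// Keys permitted inside `error`, matching the envelope from Pattern 3.
const ALLOWED_ERROR_KEYS = ["code", "message", "details", "requestId"];

// Hypothetical helper: asserts that a response body matches the shared
// envelope and carries nothing beyond the agreed fields.
function assertErrorEnvelope(body: unknown, expectedCode: string): void {
  assert.equal(typeof body, "object", "body must be an object");
  assert.notEqual(body, null, "body must not be null");
  const { error } = body as { error: Record<string, unknown> };
  assert.equal(typeof error, "object", "body.error must be an object");
  assert.notEqual(error, null, "body.error must not be null");
  assert.equal(error.code, expectedCode);
  assert.equal(typeof error.message, "string");
  assert.equal(typeof error.requestId, "string");
  // Any extra key (stack, sql, path, ...) counts as a leak of internals.
  for (const key of Object.keys(error)) {
    assert.ok(ALLOWED_ERROR_KEYS.indexOf(key) !== -1, `unexpected key in envelope: ${key}`);
  }
}
```

A validation, auth, or upstream timeout test then reduces to triggering the failure, checking the status code, and calling `assertErrorEnvelope(responseBody, "UPSTREAM_TIMEOUT")` or the relevant code.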

Reliability checks before shipping

Confirm each item:

- central middleware handles all errors

- boundary validation is in place

- status/code mapping is consistent

- logs include request correlation data

- error internals are not leaked to clients

- retry policy is idempotency-aware

- failure-path tests exist

Closing

Reliable Node.js systems are not the ones with no errors. They are the ones with predictable failure behavior.

When classification, response mapping, and observability are standardized, incidents become shorter and trust in the platform increases.